Paper Review: "Sim-to-Real: Learning Agile Locomotion For Quadruped Robots"

What is this paper about?

This paper demonstrates the overall process of ‘Learning Agile Locomotion for Quadruped Robots’, focusing on reducing the sim-to-real gap—the discrepancies between the physics simulator and the real world. The authors implement agile locomotion gaits, such as trotting and galloping, by controlling two major factors that impact the reality gap:

- High Simulator Fidelity: improving system identification, actuator modeling, and latency simulation.

- Robust Controller: randomizing the physical environment, designing a compact observation space, and adding perturbations.

Narrowing this gap is especially important in Reinforcement Learning (RL), because control policies learned in a simulator are deployed directly to hardware, and low simulation fidelity can make those policies fail in the real world.

Key Contributions

- End-to-End Pipeline: Established a comprehensive process for deploying RL models from simulation to hardware.

- Sim-to-Real Analysis: Analyzed major factors that influence the reality gap and proposed solutions.

- Zero-Shot Transfer: Demonstrated that agile locomotion gaits can be learned in simulation and deployed without further fine-tuning on the real robot.

Background: Why I read this paper

Reinforcement Learning is a powerful approach for legged systems (e.g., quadruped robots, bipedal robots, and humanoids) because learned policies can be made robust to environmental perturbations. Recently, RL has become a go-to method for learning agile locomotion.

I am currently working on a personal project to build a custom legged system, aiming to develop a bipedal or humanoid robot in the near future. To give my robot dynamic, agile motion, I plan to adopt deep reinforcement learning techniques for the high-level controller. This requires a solid understanding of how to mitigate the sim-to-real gap and how to design robust controllers.

For these reasons, this paper aligns perfectly with my engineering goals. I have re-categorized the factors that impact the sim-to-real gap to help me improve my own simulation environment and build a robust controller.

Critical Thinking & Takeaway

Overcoming the Reality Gap

The “Reality Gap” is a major obstacle to applying deep RL in robotics.

- On the simulation side, the authors identify inaccurate actuator models and a lack of latency modeling as the two primary causes of this gap.

- On the controller side, robustness can be improved by injecting noise, perturbing the simulated robot, leveraging multiple simulators, and applying domain randomization or dynamics randomization.

Robot Platform And Physics Simulation

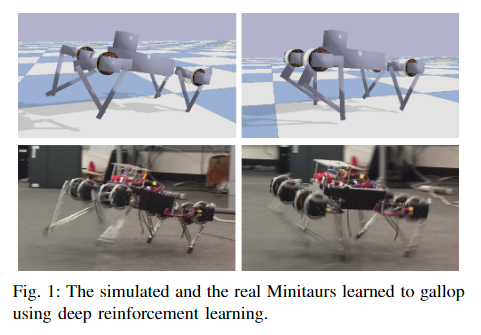

The robot platform is the Minitaur from Ghost Robotics (Figure 1 bottom), a quadruped robot with eight direct-drive actuators.

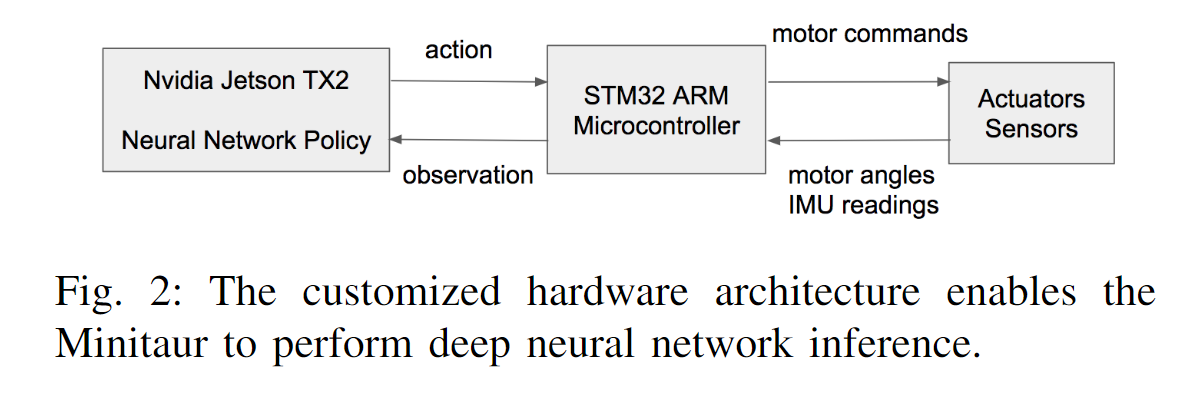

The Nvidia Jetson TX2 acts as the high-level controller, running the neural network policies learned with deep RL. The STM32 ARM microcontroller serves as the low-level controller, executing position control for the motors. The TX2 communicates with the microcontroller over UART. At every control step, sensor measurements are collected at the microcontroller and sent back to the TX2, where they are fed into the neural network policy to decide the actions. These actions are then transmitted to the microcontroller and executed by the actuators (Figure 2).

Learning Locomotive Controller - Background

This paper formulates locomotion control as a Partially Observable Markov Decision Process (POMDP) and solves it using a policy gradient method.

An MDP is a tuple $(S, A, r, D, P_{sas'}, \gamma)$ where:

- $S$ is the state space; $A$ is the action space

- $r$ is the reward function

- $D$ is the distribution of initial states $s_0$

- $P_{sas'}$ is the transition probability

- $\gamma \in [0, 1]$ is the discount factor

The problem is partially observable because certain states, such as the Minitaur’s base position and foot contact forces, are not accessible due to a lack of sensors. Thus, at every control step, a partial observation $o \in O$, rather than a complete state $s \in S$, is observed. Reinforcement learning optimizes a policy $\pi : O \mapsto A$ that maximizes the expected return (accumulated rewards) $R$.

\[\pi^* = \arg \max_\pi E_{s_0 \sim D}[R_\pi(s_0)] \tag{1}\]

Learning Locomotive Controller - Reward Function

The reward function is designed to encourage faster forward running speed while penalizing high energy consumption.

\[r = (\mathbf{p}_n - \mathbf{p}_{n-1}) \cdot \mathbf{d} - w \Delta t |\boldsymbol{\tau}_n \cdot \dot{\mathbf{q}}_n| \tag{2}\]

where:

- $\mathbf{p}_n$ and $\mathbf{p}_{n-1}$ are the positions of the Minitaur’s base at the current and previous time steps, respectively.

- $\mathbf{d}$ is the desired running direction.

- $\Delta t$ is the time step.

- $\boldsymbol{\tau}$ are the motor torques.

- $\dot{\mathbf{q}}$ are the motor velocities.

The first term measures the running distance toward the desired direction, and the second term measures energy expenditure. $w$ is the weight that balances these two terms.
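To make Equation (2) concrete for my own simulator, here is a minimal sketch of the per-step reward in plain Python. The function name `step_reward` and the numbers in the usage note (`w`, `dt`, the torques and velocities) are my own illustrative assumptions, not values taken from this review.

```python
def dot(a, b):
    """Dot product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def step_reward(p_curr, p_prev, direction, torques, motor_velocities, w, dt):
    """Per-step reward from Equation (2).

    progress: displacement of the base projected on the desired direction.
    energy:   dt * |tau . q_dot|, the energy-expenditure term.
    """
    displacement = [c - p for c, p in zip(p_curr, p_prev)]
    progress = dot(displacement, direction)
    energy = dt * abs(dot(torques, motor_velocities))
    return progress - w * energy
```

For example, with a 2 cm step forward, eight motors each at 1 Nm and 2 rad/s, and assumed values `w = 0.008` and `dt = 0.006`, calling `step_reward((0.02, 0, 0), (0, 0, 0), (1, 0, 0), [1.0] * 8, [2.0] * 8, 0.008, 0.006)` gives 0.02 of progress minus a small energy penalty.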

Narrowing The Reality Gap - Improved Simulation

To ensure the policy learned in simulation transfers to the real robot, simulation fidelity was improved in three key areas:

- Accurate URDF Modeling: The robot was disassembled to measure the precise dimensions, mass, and center of mass for each link. Inertia was estimated based on shape and mass, assuming uniform density.

- Actuator Modeling: The default constraint-based motor model in physics engines often leads to policies that oscillate in the real world. To fix this, a non-linear actuator model was developed. This model accounts for the torque saturation of real DC motors, using a piece-wise linear function to map current to torque more accurately than a single linear relation.

- Latency Modeling: Real-world systems have delays between sensing and actuation (approx. 3ms for the microcontroller and 15-19ms for the high-level controller). The simulation models this latency by keeping a history of observations and interpolating them to mimic the delay found in the physical hardware.
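The actuator and latency ideas above can be sketched in a few lines of Python. This is my own simplified reading, not the authors' implementation: the breakpoints in `CURRENT_A` and `TORQUE_NM` are made-up placeholder values, and `DelayedObservation` is a hypothetical helper name.

```python
from bisect import bisect_right
from collections import deque


def piecewise_linear(x, xs, ys):
    """Interpolate y at x over sorted breakpoints xs, clamping at both ends."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    i = bisect_right(xs, x)  # now xs[i - 1] <= x < xs[i]
    frac = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
    return ys[i - 1] + frac * (ys[i] - ys[i - 1])


# Placeholder breakpoints (assumed): the slope flattens as current grows,
# which is how saturation shows up in a current-to-torque curve.
CURRENT_A = [0.0, 10.0, 20.0, 40.0]
TORQUE_NM = [0.0, 1.0, 1.5, 1.75]


def motor_torque(current):
    """Map motor current to torque with a symmetric saturating curve."""
    sign = 1.0 if current >= 0 else -1.0
    return sign * piecewise_linear(abs(current), CURRENT_A, TORQUE_NM)


class DelayedObservation:
    """Keeps a history of timestamped observations and replays them late."""

    def __init__(self, max_history=100):
        self.history = deque(maxlen=max_history)  # (timestamp, observation)

    def record(self, t, obs):
        self.history.append((t, list(obs)))

    def read(self, t_now, latency):
        """Observation as it was at t_now - latency, linearly interpolated."""
        if not self.history:
            raise ValueError("no observations recorded yet")
        target = t_now - latency
        items = list(self.history)
        if target <= items[0][0]:
            return items[0][1]
        for (t0, o0), (t1, o1) in zip(items, items[1:]):
            if t0 <= target <= t1:
                frac = (target - t0) / (t1 - t0)
                return [a + frac * (b - a) for a, b in zip(o0, o1)]
        return items[-1][1]
```

In a training loop, the simulator would call `record` after every physics step and the policy would consume `read(t_now, latency)` instead of the fresh observation, so the policy only ever sees stale data, as it would on hardware.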

Narrowing The Reality Gap - Robust Controller

Even with an improved simulation, discrepancies remain. A robust controller was trained to handle these uncertainties using the following techniques:

- Dynamics Randomization: During training, physical parameters such as mass (80%-120%), motor friction, battery voltage, and contact friction were randomized at the beginning of each episode. This prevents the policy from overfitting to a specific set of simulation parameters.

- Perturbations: To teach the robot to recover from instability, random perturbation forces (130N - 220N) were applied to the robot’s base every 1.2 seconds during training.

- Compact Observation Space: Noisy sensor data can confuse the policy. The observation space was minimized to include only essential data (roll, pitch, base angular velocities, and motor angles), excluding unreliable measurements such as the yaw of the base.
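As a note to myself for my own training setup, the randomization and perturbation steps above might look roughly like this. Only the mass range (80%-120%), the force magnitude (130N - 220N), and the 1.2 second period come from the text above; the other parameter ranges, the 0.006 s time step in the usage note, and all function names are placeholder assumptions.

```python
import math
import random


def sample_episode_dynamics(rng, nominal_mass):
    """Draw one set of physical parameters at the start of an episode."""
    return {
        "mass": nominal_mass * rng.uniform(0.8, 1.2),  # 80%-120% of nominal
        "motor_friction": rng.uniform(0.0, 0.05),      # placeholder range
        "battery_voltage": rng.uniform(14.0, 16.8),    # placeholder range
        "contact_friction": rng.uniform(0.5, 1.25),    # placeholder range
    }


def sample_perturbation(rng):
    """Random-direction force vector with magnitude in [130, 220] N."""
    magnitude = rng.uniform(130.0, 220.0)
    # Normalizing a Gaussian sample gives a uniformly random 3D direction.
    v = [rng.gauss(0.0, 1.0) for _ in range(3)]
    norm = math.sqrt(sum(c * c for c in v))
    return [magnitude * c / norm for c in v]


def perturbation_due(step, dt, period=1.2):
    """True on the simulation steps where a new push should be applied."""
    steps_per_period = round(period / dt)
    return step > 0 and step % steps_per_period == 0
```

With an assumed time step of 0.006 s, `perturbation_due(step, 0.006)` fires every 200 steps, and `sample_episode_dynamics(random.Random(0), nominal_mass)` would be called once per episode reset.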

Controller Architecture & Learning Two Gaits

The paper proposes a Hybrid Policy that combines a user-specified open-loop signal with a learned feedback component. This allows the user to guide the gait style while Reinforcement Learning handles the balance control.

\[a(t, o) = \bar{a}(t) + \pi(o) \tag{3}\]

- $\bar{a}(t)$ (Open Loop Component): A user-defined reference trajectory (typically a periodic signal).

- $\pi(o)$ (Feedback Component): The policy learned by RL to adjust leg poses based on observations.

Using this architecture, two distinct gaits were learned:

- Galloping (Learning from Scratch):

  - Reference: $\bar{a}(t) = 0$ (No user guidance provided).

  - Process: The feedback component $\pi(o)$ was given large output bounds, allowing the RL agent to explore and discover the most efficient gait for speed on its own. The result was a natural galloping gait.

- Trotting (User-Guided Learning):

  - Reference: $\bar{a}(t)$ was set to a sine wave trajectory to guide the legs into a diagonal pair movement pattern.

  - Process: The feedback component $\pi(o)$ was restricted to small bounds (e.g., $\pm 0.25$ rad), forcing the agent to stick to the trotting pattern while learning only the necessary adjustments to maintain balance.
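Equation (3) and the two gait setups can be sketched as follows. This is a simplified illustration under my own assumptions: one action value per leg, a made-up amplitude and frequency for the sine reference, an assumed leg ordering, and clipping as the way to enforce the feedback bounds. The `policy` argument stands in for the learned network $\pi(o)$.

```python
import math


def trot_reference(t, amplitude=0.3, frequency_hz=2.0):
    """Hypothetical open-loop signal: diagonal leg pairs move together,
    and the two pairs are half a period apart."""
    phase = 2.0 * math.pi * frequency_hz * t
    a = amplitude * math.sin(phase)
    b = amplitude * math.sin(phase + math.pi)
    # Assumed leg order: front-left, back-right, front-right, back-left.
    return [a, a, b, b]


def zero_reference(t):
    """Galloping setup: no user guidance, so the reference is zero."""
    return [0.0, 0.0, 0.0, 0.0]


def hybrid_action(t, obs, policy, reference, bound):
    """Equation (3): a(t, o) = a_bar(t) + pi(o), with pi(o) kept in [-bound, bound]."""
    feedback = [max(-bound, min(bound, u)) for u in policy(obs)]
    return [r + f for r, f in zip(reference(t), feedback)]
```

For trotting, `hybrid_action(t, obs, policy, trot_reference, 0.25)` keeps the learned correction small around the sine reference. For galloping, `hybrid_action(t, obs, policy, zero_reference, bound)` with a large `bound` leaves the gait almost entirely to the policy.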

Conclusion

This paper focuses on locomotion policies that transfer from simulation to hardware. By combining accurate physical models with robust controllers, the authors successfully deployed controllers learned in simulation onto the real robot. The paper was particularly informative for me: I learned the overall deployment process and analyzed the factors that influence the reality gap in legged systems.

Reference

Citation: https://doi.org/10.48550/arXiv.1804.10332

NOTE: This paper is open access.
